The Day the Maps Went Blank: Unpacking the Cloudflare BYOIP BGP Outage of 2026

How a single empty string of code wiped 25% of Cloudflare's network. A deep dive into the 2026 BGP outage and the fragility of the web.

Imagine waking up, getting in your car, and turning on your GPS, only to find that every major highway has seemingly vanished from the map. You can’t get to work, the delivery trucks are stranded, and the world momentarily grinds to a halt.

On February 20, 2026, the digital equivalent of this scenario played out across the global internet. Cloudflare, a titan of web infrastructure that handles approximately 20% of all internet traffic, suffered a massive six-hour outage. Major platforms—from Uber and Workday to Minecraft and Wikipedia—suddenly became unreachable.

But this wasn’t the result of a sophisticated cyberattack by a nation-state, nor was it a physically severed cable at the bottom of the ocean. It was an internal configuration update. More specifically, it was a single, empty string of text in an API query that triggered a cascading failure across the globe.

Here is the deep dive into what happened, the technical unraveling of Cloudflare’s systems, the history of these digital blackouts, and how the internet must adapt to survive its own fragility.

When a core piece of BGP routing fails, the internet's "map" vanishes. The February 20 outage saw 25% of Cloudflare's BYOIP network accidentally wiped from global routing tables.

The Foundation: BGP, BYOIP, and the Internet's Phonebook

To understand the sheer scale of the outage, we have to translate two of the internet's most critical, yet invisible, technical acronyms: BGP and BYOIP.

Think of the internet as a massive, sprawling collection of digital islands. To get data from your laptop (one island) to a server hosting a website (another island), you need a map. BGP (Border Gateway Protocol) is the internet’s constantly updating, dynamic map. It allows internet service providers, telecom operators, and tech giants to announce to the world, "Hey, if you want to reach this block of IP addresses, send the traffic my way, I have a route!" Every other network then chooses among the announcements it hears based on its own policies and the length of the advertised path, which is not always the fastest route.

BYOIP (Bring Your Own IP) is exactly what it sounds like. Imagine you have a highly recognizable, premium 1-800 phone number for your business. When you switch phone carriers, you want to keep that exact number. In the networking world, BYOIP allows giant enterprises to bring their own massive blocks of custom IP addresses to Cloudflare's network. Cloudflare then uses BGP to announce to the global internet that its edge servers are now the optimal, most secure route to reach those specific IPs.
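To make the "map" idea concrete, here is a minimal sketch of a toy routing table, not real BGP. The `RouteTable` type, its methods, and the `edge-network` label are all hypothetical, and the prefix comes from the reserved documentation range rather than any real customer block. It shows only one thing: once a prefix is withdrawn, a lookup for an address inside it finds nothing.

```go
package main

import (
	"fmt"
	"net/netip"
)

// RouteTable is a toy stand-in for a router's view of announced prefixes.
// Real BGP tracks paths, attributes, and policy; this keeps only an origin label.
type RouteTable struct {
	routes map[netip.Prefix]string
}

func NewRouteTable() *RouteTable {
	return &RouteTable{routes: make(map[netip.Prefix]string)}
}

// Announce records that origin claims to carry traffic for cidr.
func (rt *RouteTable) Announce(cidr, origin string) error {
	p, err := netip.ParsePrefix(cidr)
	if err != nil {
		return err
	}
	rt.routes[p.Masked()] = origin
	return nil
}

// Withdraw removes the announcement for cidr, if any.
func (rt *RouteTable) Withdraw(cidr string) error {
	p, err := netip.ParsePrefix(cidr)
	if err != nil {
		return err
	}
	delete(rt.routes, p.Masked())
	return nil
}

// Lookup returns the origin of the longest announced prefix containing ip.
func (rt *RouteTable) Lookup(ip string) (string, bool) {
	addr, err := netip.ParseAddr(ip)
	if err != nil {
		return "", false
	}
	best, bestBits := "", -1
	for p, origin := range rt.routes {
		if p.Contains(addr) && p.Bits() > bestBits {
			best, bestBits = origin, p.Bits()
		}
	}
	return best, bestBits >= 0
}

func main() {
	rt := NewRouteTable()
	// 203.0.113.0/24 is a documentation range, used here as a placeholder.
	if err := rt.Announce("203.0.113.0/24", "edge-network"); err != nil {
		fmt.Println("announce failed:", err)
		return
	}
	origin, ok := rt.Lookup("203.0.113.10")
	fmt.Println("before withdraw:", origin, ok)

	if err := rt.Withdraw("203.0.113.0/24"); err != nil {
		fmt.Println("withdraw failed:", err)
		return
	}
	origin, ok = rt.Lookup("203.0.113.10")
	fmt.Println("after withdraw:", origin, ok)
}
```

The longest-prefix rule in `Lookup` is the same idea routers use when several announcements cover one address: the most specific one wins.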

On February 20, Cloudflare’s automated systems accidentally took a massive digital eraser to that BGP map.

The Catalyst: "Code Orange" and the Empty String Bug

To understand why this happened, we have to look at how Cloudflare manages its infrastructure. Cloudflare had recently initiated a sweeping engineering project called "Code Orange: Fail Small." The goal was noble and necessary: eliminate manual, human-error-prone tasks by replacing them with automated, safely tested code deployments.

One manual task slated for automation was the "cleanup" process—the removal of old, unwanted BYOIP prefixes from the network when a customer no longer needed them. Engineers built an automated sub-task to periodically sweep the system's Addressing API, check for any IP prefixes flagged for removal, and delete them.

Here is exactly where the catastrophe occurred. The automated task-runner system made a query to Cloudflare's API that looked like this:

    resp, err := d.doRequest(ctx, http.MethodGet, `/v1/prefixes?pending_delete`, nil)

And here is the relevant part of the API server implementation that received that request:

    if v := req.URL.Query().Get("pending_delete"); v != "" {
        // ignore other behavior and fetch pending objects from the ip_prefixes_deleted table
        prefixes, err := c.RO().IPPrefixes().FetchPrefixesPendingDeletion(ctx)
        if err != nil {
            api.RenderError(ctx, w, ErrInternalError)
            return
        }
        api.Render(ctx, w, http.StatusOK, renderIPPrefixAPIResponse(prefixes, nil))
        return
    }

If you look closely at the API query, the client is passing pending_delete with absolutely no value attached to it (it doesn't say pending_delete=true). Because of this, when the API server executed Query().Get("pending_delete"), the result was an empty string ("").

Because the code only entered the pending-deletion branch when v != "" (the value is not an empty string), the condition evaluated to false and the branch was skipped entirely. The handler fell through to its default behavior, so the server interpreted this valueless request not as "find items pending deletion," but rather as a blanket request for all BYOIP prefixes.
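The difference between "the key is missing" and "the key is present with no value" is easy to check with Go's standard `net/url` package. The sketch below is an illustration, not Cloudflare's fix; the `inspect` helper is a hypothetical name. It reads the parameter two ways: `Get`, which returns an empty string in both cases, and `Has` (available since Go 1.17), which reports whether the key appeared at all.

```go
package main

import (
	"fmt"
	"net/url"
)

// inspect parses a raw query string and reports both the value of the
// pending_delete key and whether the key is present at all.
// url.Values.Has requires Go 1.17 or later.
func inspect(rawQuery string) (value string, present bool, err error) {
	q, err := url.ParseQuery(rawQuery)
	if err != nil {
		return "", false, err
	}
	return q.Get("pending_delete"), q.Has("pending_delete"), nil
}

func main() {
	for _, raw := range []string{"pending_delete", "pending_delete=true", ""} {
		value, present, err := inspect(raw)
		if err != nil {
			fmt.Println("parse error:", err)
			continue
		}
		fmt.Printf("query=%q value=%q present=%t\n", raw, value, present)
	}
}
```

A handler that branches on presence rather than on a non-empty value would treat `?pending_delete` and `?pending_delete=true` the same way. Rejecting the valueless form with an explicit error is another reasonable design.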

The system then happily interpreted the returned list of every single BYOIP prefix as the target list for deletion. In a matter of minutes, the automated task systematically wiped out approximately 1,100 BYOIP prefixes—25% of Cloudflare’s entire global BYOIP network. It didn't just withdraw the routes; it began shredding the dependent service bindings that connected those IP addresses to Cloudflare's core CDN, Magic Transit, and Spectrum services.

Why didn't testing catch this? Cloudflare's staging environment simulated what a human customer would do via the self-service dashboard. The tests did not cover a scenario where the automated task-runner service would independently execute malformed queries without explicit human input.

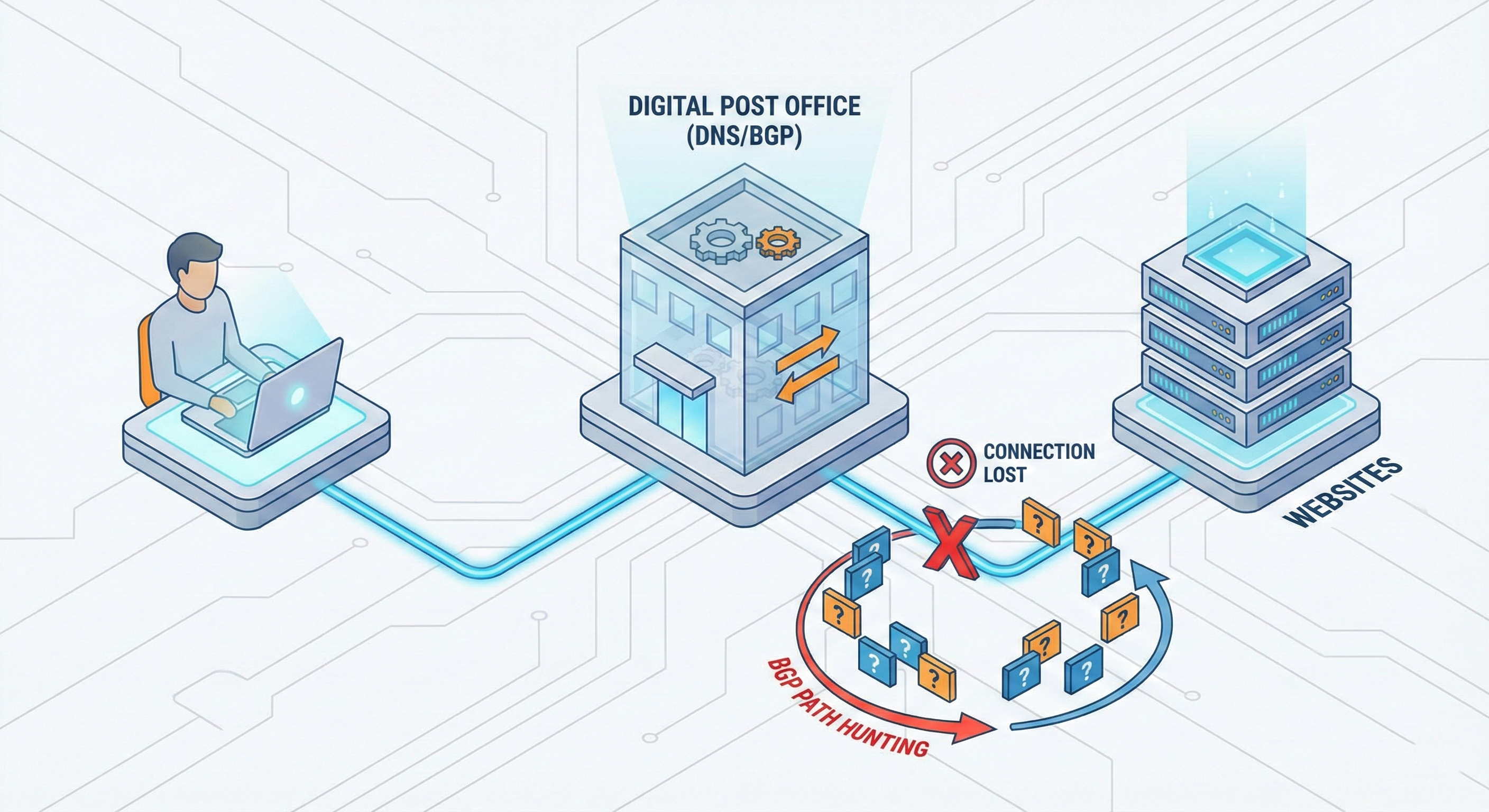

The anatomy of BGP Path Hunting. When Cloudflare's API bug accidentally deleted BGP routes, global traffic bounced endlessly between routers trying to find a destination that no longer existed on the map, resulting in massive connection timeouts.

The Blast Radius: BGP Path Hunting

At 17:56 UTC, the internet began to fracture. When those 1,100 routes were deleted, global traffic hit a phenomenon known as BGP Path Hunting.

Imagine driving to a destination, but the main bridge is suddenly gone. You take a detour, but that road is a dead end. You try another, and another. In the networking world, when a prefix is suddenly withdrawn, routers across the globe don't immediately give up. They frantically pass traffic back and forth, exploring secondary and tertiary routes, hunting for a path to these vanished IP addresses. They will continue this exhaustive search until the connections simply time out and fail.

From the user's perspective, websites hung indefinitely before showing error screens. Even visitors trying to reach Cloudflare’s own 1.1.1.1 DNS resolver website were met with HTTP 403 errors and an "Edge IP Restricted" message, completely locked out of the service.

The Grueling Road to Recovery

Why did it take over six hours to fix a single bug? Because in the digital world, destruction is instantaneous, but reconstruction requires immense precision.

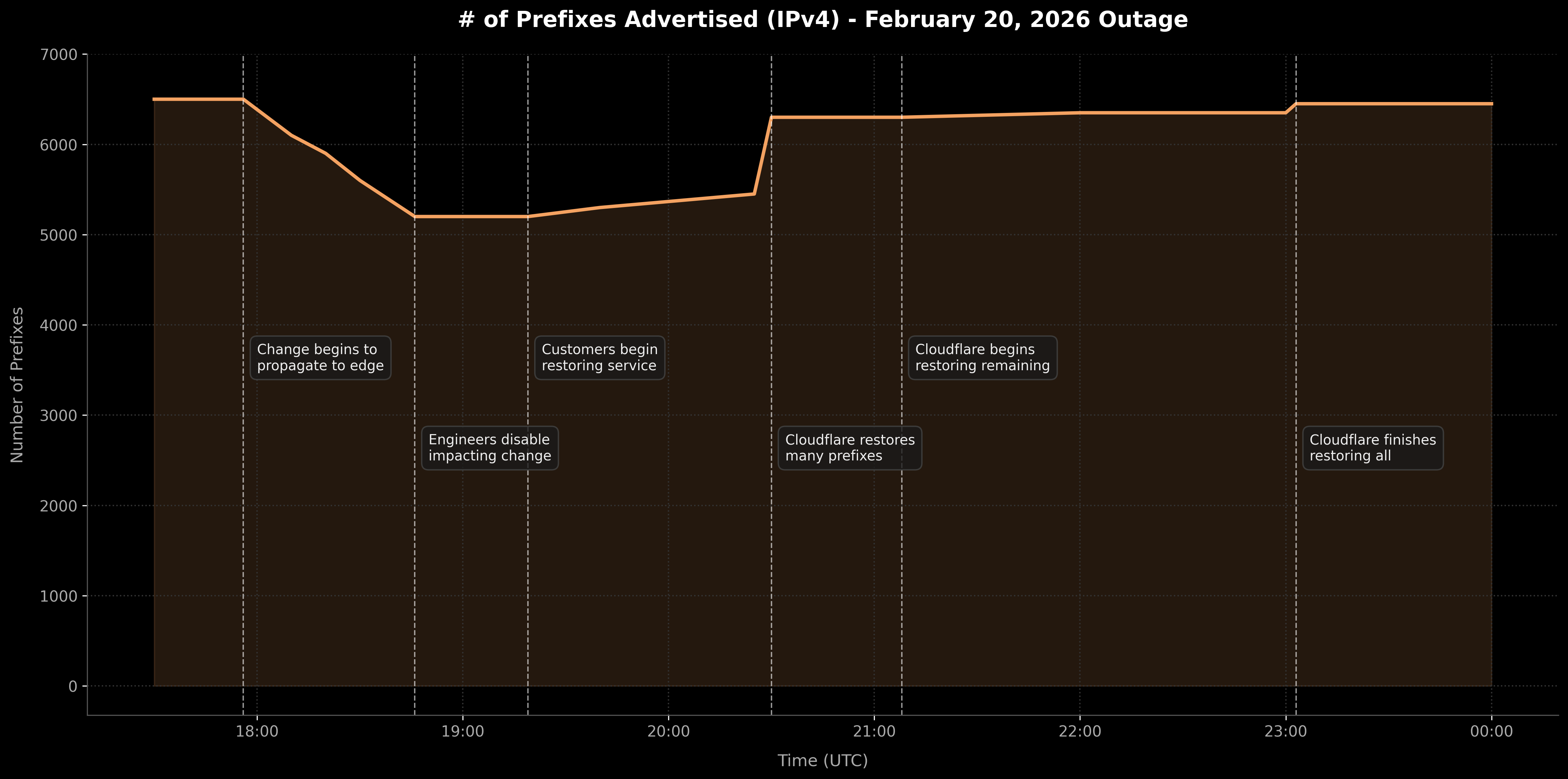

The timeline of the February 20, 2026 BGP incident. Notice the sharp drop at 17:56 UTC as the automated cleanup script withdrew 25% of Cloudflare's BYOIP prefixes, followed by the tiered, multi-hour recovery process.

When the buggy script fired, it damaged customer accounts to varying degrees, breaking the recovery process into frustratingly complex tiers:

- The "Lucky" Ones: For the majority of impacted customers, only their BGP advertisement was withdrawn. Once Cloudflare engineers identified and killed the rogue script at 18:46 UTC, these customers were able to log into their Cloudflare dashboards, manually toggle their IP advertisements back on, and restore their own service.

- The Hard Cases: For about 300 prefixes, the bug had dug much deeper. It had systematically deleted everything—including the foundational service bindings that tell Cloudflare's servers what to actually do with the traffic once it arrives. For these customers, flipping a switch on a dashboard did nothing, because the edge servers had literally forgotten what those IP addresses were used for.

To fix these severely impacted accounts, Cloudflare engineers could not just revert a single line of code. They had to manually dig into authoritative databases, reconstruct the deleted configurations from historical state data, and initiate a massive, coordinated global update to push those restored bindings to every single edge server in their network. Global service was finally fully restored at 23:03 UTC.

A Pattern of Fragility: History of Self-Inflicted Wounds

While Cloudflare's infrastructure is incredibly robust and fends off massive cyberattacks daily, this is not the first time a tiny internal error has brought vast swaths of the internet to its knees. The irony of modern web infrastructure is that it is often most vulnerable to the people building it.

- July 2019 (The WAF Bug): Cloudflare deployed a single, poorly written Regular Expression (RegEx) rule to their Web Application Firewall. This caused CPU usage on their edge servers to instantly spike to 100%, dropping 82% of global traffic for nearly 30 minutes.

- July 2020 (The Atlanta Router): A minor configuration error by an engineer on a router in Atlanta accidentally told the global network to route all traffic through that single, localized point. The backbone immediately collapsed under the pressure, causing a 50% drop in traffic for 27 minutes.

- June 2022 (The BGP Change): A routine network configuration change across 19 data centers caused a critical subset of BGP prefixes to be withdrawn. Those locations carry a large share of Cloudflare's traffic, and the result was a major disruption for millions of users globally for over an hour.

In almost every major incident, it wasn't hackers that took down the internet—it was well-intentioned engineers deploying code that behaved unexpectedly at scale.

The internet's reliance on centralized infrastructure is a double-edged sword. While mega-providers like Cloudflare act as massive shields against digital storms and cyberattacks, a single internal configuration error can bring the very servers they protect entirely offline.

How the Internet Must Adapt

We can no longer afford single points of failure. The February 20 incident proves that the internet's architecture must aggressively evolve in response to these mega-outages.

First, enterprises must adopt Multi-CDN & BGP Failover architectures. Relying solely on one provider for core routing is a critical business risk. If Cloudflare unintentionally withdraws a route, automated enterprise systems should be designed to fail over to AWS, Fastly, or Akamai within seconds, bypassing the outage entirely.
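The selection logic at the heart of such a failover setup can be sketched in a few lines. Everything here is an assumption for illustration: the `Provider` type, the `Healthy` probe, and the provider names are hypothetical. In practice the switch would happen through DNS or BGP changes rather than a function call.

```go
package main

import (
	"errors"
	"fmt"
)

// Provider pairs a name with a health probe. The probe is a hypothetical
// hook; a real one might fetch a canary URL through that provider.
type Provider struct {
	Name    string
	Healthy func() bool
}

var ErrNoHealthyProvider = errors.New("no healthy provider available")

// selectProvider returns the first provider, in priority order, whose
// health probe succeeds.
func selectProvider(ordered []Provider) (string, error) {
	for _, p := range ordered {
		if p.Healthy != nil && p.Healthy() {
			return p.Name, nil
		}
	}
	return "", ErrNoHealthyProvider
}

func main() {
	providers := []Provider{
		{Name: "primary-cdn", Healthy: func() bool { return false }}, // simulated outage
		{Name: "secondary-cdn", Healthy: func() bool { return true }},
	}
	name, err := selectProvider(providers)
	if err != nil {
		fmt.Println("failover impossible:", err)
		return
	}
	fmt.Println("routing traffic via:", name)
}
```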

Second, the industry needs Circuit Breakers for BGP. Cloudflare noted in their post-mortem that they are developing internal circuit breakers to detect abnormally fast BGP route deletions. The broader internet community—especially Tier 1 ISPs—must adopt similar heuristic monitoring. If 25% of a major provider's routes vanish in 10 minutes, the global network should automatically freeze the previous known-good state, throttle the deletions, and demand human verification before updating the global map.
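As a rough illustration of the idea, here is a minimal sketch of a rate-based deletion breaker. It is not Cloudflare's design: the `Breaker` type, its fields, and the thresholds are assumptions, and timestamps are plain Unix seconds to keep the example small. The breaker refuses any deletion batch that would push the total removed within a sliding window above a fixed fraction of known prefixes.

```go
package main

import (
	"errors"
	"fmt"
)

var ErrBreakerTripped = errors.New("deletion breaker tripped: human approval required")

type deletion struct {
	at    int64 // Unix seconds
	count int
}

// Breaker limits how many prefixes may be deleted within a sliding window.
// All fields and thresholds are illustrative assumptions.
type Breaker struct {
	Total         int     // number of prefixes known to the system
	MaxFraction   float64 // e.g. 0.05 allows at most 5% per window
	WindowSeconds int64
	recent        []deletion
}

// Allow records a batch of n deletions at time now (Unix seconds) unless the
// batch would exceed the allowed fraction within the window.
func (b *Breaker) Allow(now int64, n int) error {
	cutoff := now - b.WindowSeconds
	kept := b.recent[:0]
	sum := 0
	for _, d := range b.recent {
		if d.at > cutoff { // keep only deletions still inside the window
			kept = append(kept, d)
			sum += d.count
		}
	}
	b.recent = kept
	if float64(sum+n) > b.MaxFraction*float64(b.Total) {
		return ErrBreakerTripped
	}
	b.recent = append(b.recent, deletion{at: now, count: n})
	return nil
}

func main() {
	// 4,400 is roughly the total implied by "1,100 prefixes is 25%".
	b := &Breaker{Total: 4400, MaxFraction: 0.05, WindowSeconds: 600}
	for i, now := range []int64{0, 60, 120} {
		if err := b.Allow(now, 100); err != nil {
			fmt.Printf("batch %d at t=%ds refused: %v\n", i+1, now, err)
			continue
		}
		fmt.Printf("batch %d at t=%ds allowed\n", i+1, now)
	}
}
```

With these numbers the third batch is refused, because 300 deletions in ten minutes exceeds 5% of 4,400. A refused batch is not recorded, so the breaker keeps blocking until earlier deletions age out of the window or a human raises the limit.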

Finally, we must establish a strict separation between operational state and configured state. Cloudflare recognized that their API database was acting as both the source of truth for customer configuration and the live operational database. Going forward, they are implementing database snapshots. Changes will be staged, snapshotted, and deployed using health-mediated metrics. If a bug begins wiping data, the system can instantly revert to the last known-good snapshot, rather than forcing engineers into a grueling six-hour manual rebuild.
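The snapshot-and-revert pattern described above can be sketched as follows. This is a toy in-memory model under stated assumptions, not Cloudflare's implementation: the `Store` type, `ApplyGuarded`, and the health check are hypothetical names, and a real system would persist snapshots and roll out changes gradually.

```go
package main

import "fmt"

// Store holds live prefix-to-binding configuration plus saved snapshots.
type Store struct {
	live      map[string]string
	snapshots []map[string]string
}

func NewStore() *Store { return &Store{live: make(map[string]string)} }

func (s *Store) Set(prefix, binding string) { s.live[prefix] = binding }
func (s *Store) Delete(prefix string)       { delete(s.live, prefix) }
func (s *Store) Len() int                   { return len(s.live) }

func copyMap(m map[string]string) map[string]string {
	cp := make(map[string]string, len(m))
	for k, v := range m {
		cp[k] = v
	}
	return cp
}

// Snapshot saves a copy of the live state and returns its id.
func (s *Store) Snapshot() int {
	s.snapshots = append(s.snapshots, copyMap(s.live))
	return len(s.snapshots) - 1
}

// Rollback replaces the live state with a copy of the given snapshot.
func (s *Store) Rollback(id int) error {
	if id < 0 || id >= len(s.snapshots) {
		return fmt.Errorf("unknown snapshot %d", id)
	}
	s.live = copyMap(s.snapshots[id])
	return nil
}

// ApplyGuarded snapshots the state, applies change, and rolls back if the
// health check fails afterwards. It reports whether a rollback happened.
func (s *Store) ApplyGuarded(change func(*Store), healthy func(*Store) bool) bool {
	id := s.Snapshot()
	change(s)
	if healthy(s) {
		return false
	}
	_ = s.Rollback(id) // id was just created, so this cannot fail
	return true
}

func main() {
	s := NewStore()
	s.Set("203.0.113.0/24", "cdn")      // placeholder prefixes from
	s.Set("198.51.100.0/24", "transit") // documentation ranges
	before := s.Len()

	rolledBack := s.ApplyGuarded(
		func(st *Store) { st.Delete("203.0.113.0/24"); st.Delete("198.51.100.0/24") },
		func(st *Store) bool { return st.Len()*2 >= before }, // unhealthy if over half vanished
	)
	fmt.Println("rolled back:", rolledBack, "prefixes now:", s.Len())
}
```

Copying the snapshot on rollback, rather than reusing it, keeps the saved state intact if a later change goes wrong as well.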

Conclusion

Cloudflare’s "Code Orange: Fail Small" ironically resulted in failing incredibly big. However, their transparency in the aftermath remains a gold standard for the tech industry. By openly sharing the exact code that failed and detailing their architectural missteps, they provide a learning opportunity for every network engineer on the planet.

Yet, the February 20 outage serves as a stark, humbling reminder. The modern internet often feels like an immovable, invincible force of nature. But just beneath the surface, it is held together by fragile logic, trusting protocols from the 1990s, empty strings of code, and the continuous, exhaustive vigilance of human engineers.